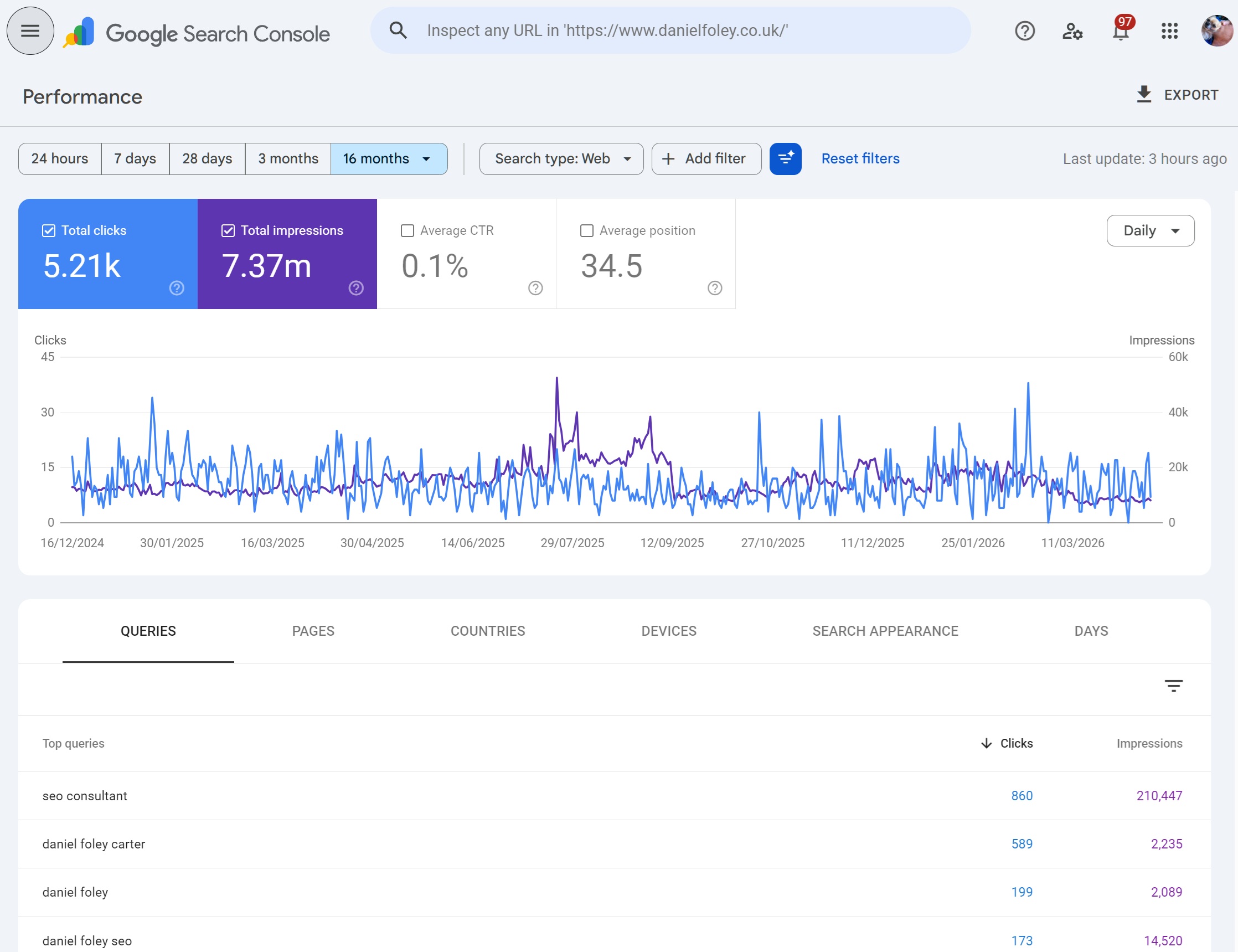

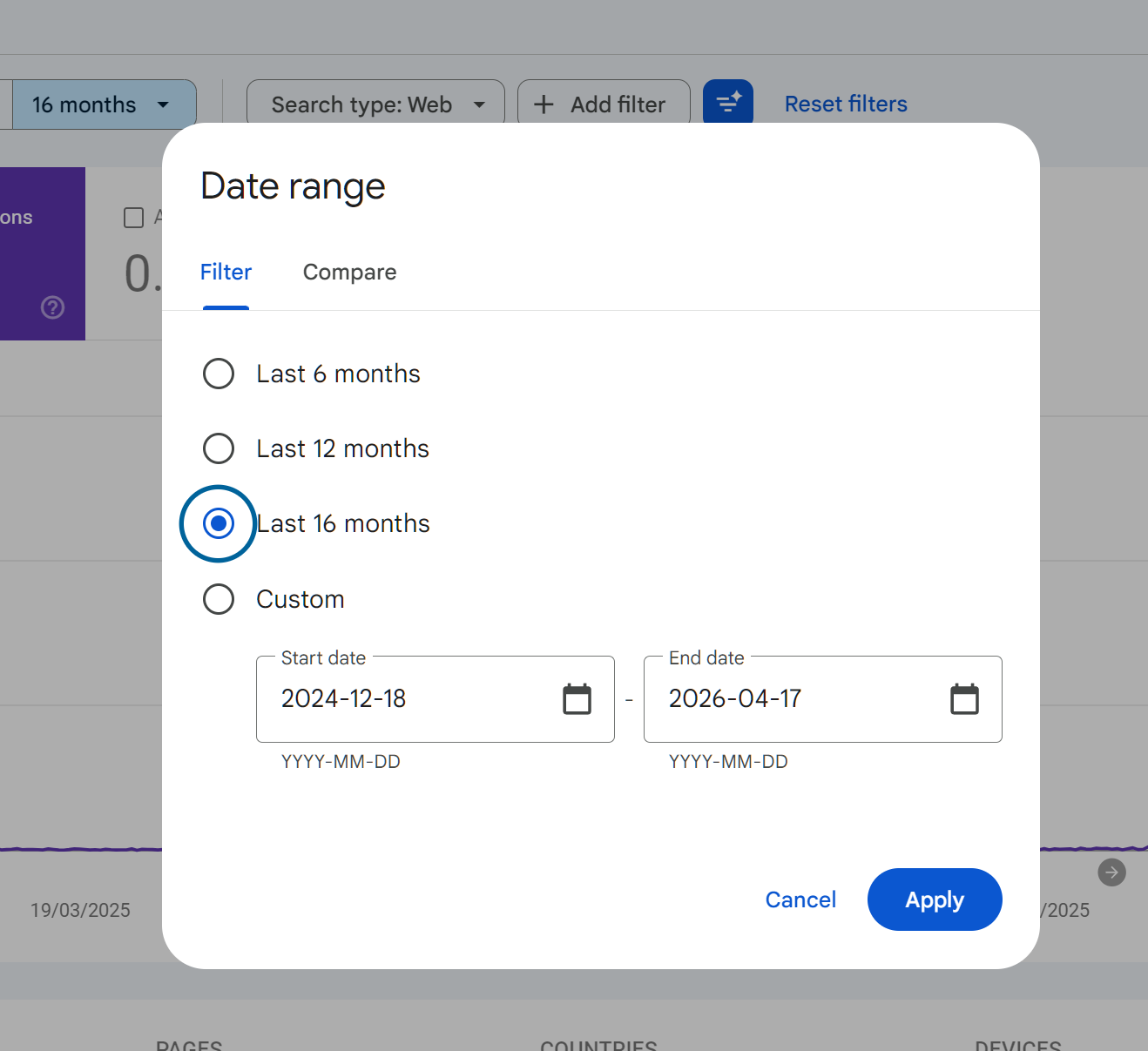

Most SEOs who have tried to build a longer-term performance story for a client have hit this wall. You log in to Search Console, open the Performance report, select the last 16 months, and that's it.

Want data beyond that? Well, unless you've been backing it up in BigQuery, it's gone. This is the point where a lot of SEOs turn to Semrush, Ahrefs or Sistrix.

Quite a few SEOs have asked about this, so I thought I'd write down what's actually going on, what Google has said about it (not much), and what actually works as a workaround. I've tried most of the options over the years and watched a few of them fail. This is the honest version rather than the vendor pitch.

What the limit actually is

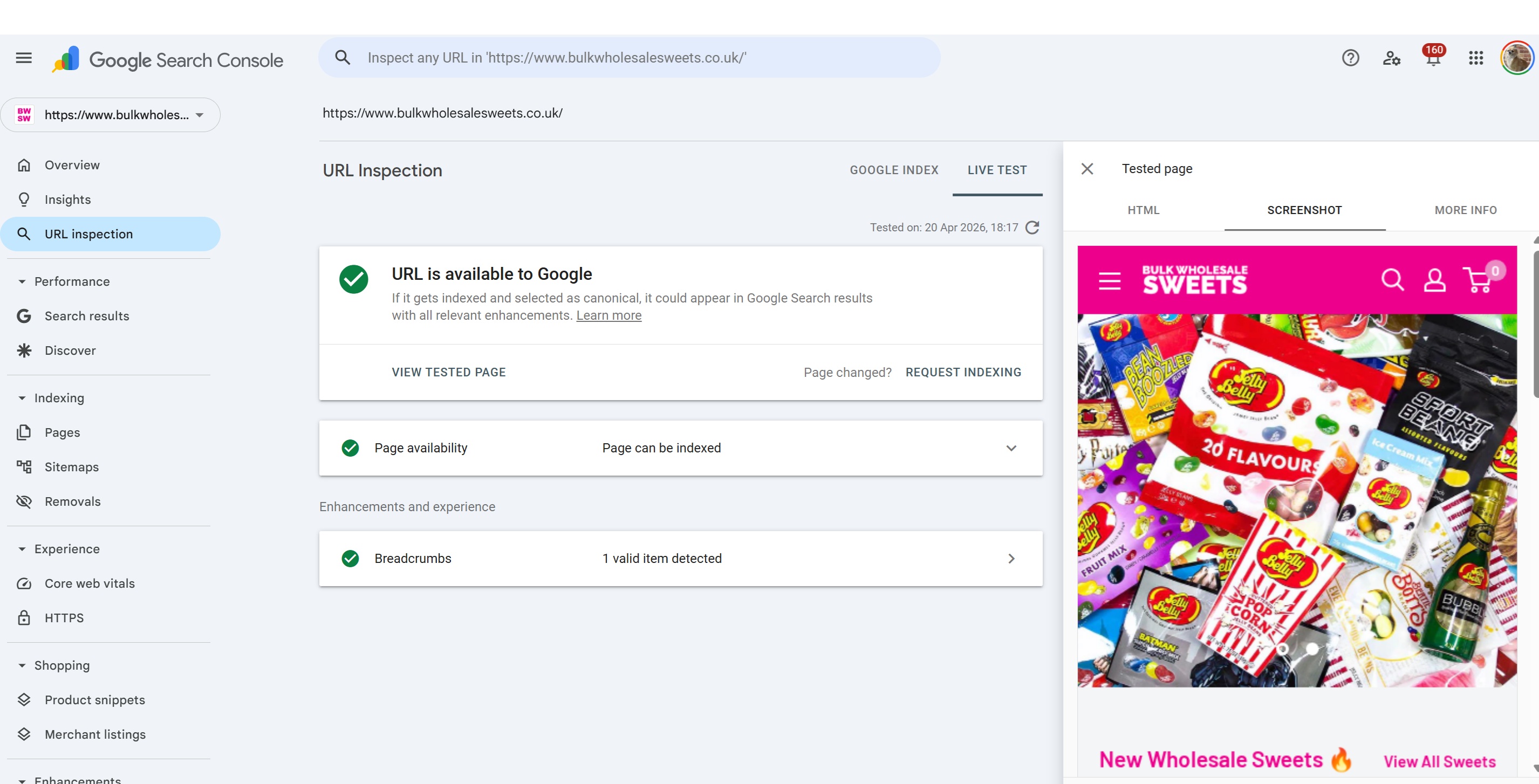

Google Search Console operates on a rolling 16-month window for performance data. That covers clicks, impressions, CTR, average position, queries, pages, countries, devices and search appearance. Every day you gain a new day at one end and lose a day at the other, and the window never extends beyond that. Once a date drops out the back, it's gone for good: there is no way to get that data back from Google. Your only chance of keeping it is to store it yourself before it ages out, either in a data warehouse such as Google's BigQuery (which you can enable in Search Console) or with tools like SEO Stack or SEO Gets.
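If you want to know which day is about to fall out of the window, a rough calculation is enough. The Python sketch below assumes the window is counted as 16 calendar months back from today; Google doesn't document the exact cut-off (and recent days also arrive with a lag), so treat the result as approximate. The function names are illustrative.

```python
from datetime import date

def months_back(d: date, months: int) -> date:
    """Return the date `months` calendar months before `d`, clamping the day
    to the last valid day of the target month (e.g. 31 Mar, 4 months back -> 30 Nov)."""
    # Work with a zero-based month index so the subtraction wraps across years.
    index = d.year * 12 + (d.month - 1) - months
    year, month0 = divmod(index, 12)
    # Length of the target month = days between its 1st and the next month's 1st.
    next_year, next_month0 = divmod(index + 1, 12)
    last_day = (date(next_year, next_month0 + 1, 1) - date(year, month0 + 1, 1)).days
    return date(year, month0 + 1, min(d.day, last_day))

def oldest_available_day(today: date, window_months: int = 16) -> date:
    """Approximate the oldest day still inside a rolling N-month window."""
    return months_back(today, window_months)

print(oldest_available_day(date(2025, 6, 15)))  # prints 2024-02-15
```

Anything older than that date needs to be in your own archive already.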

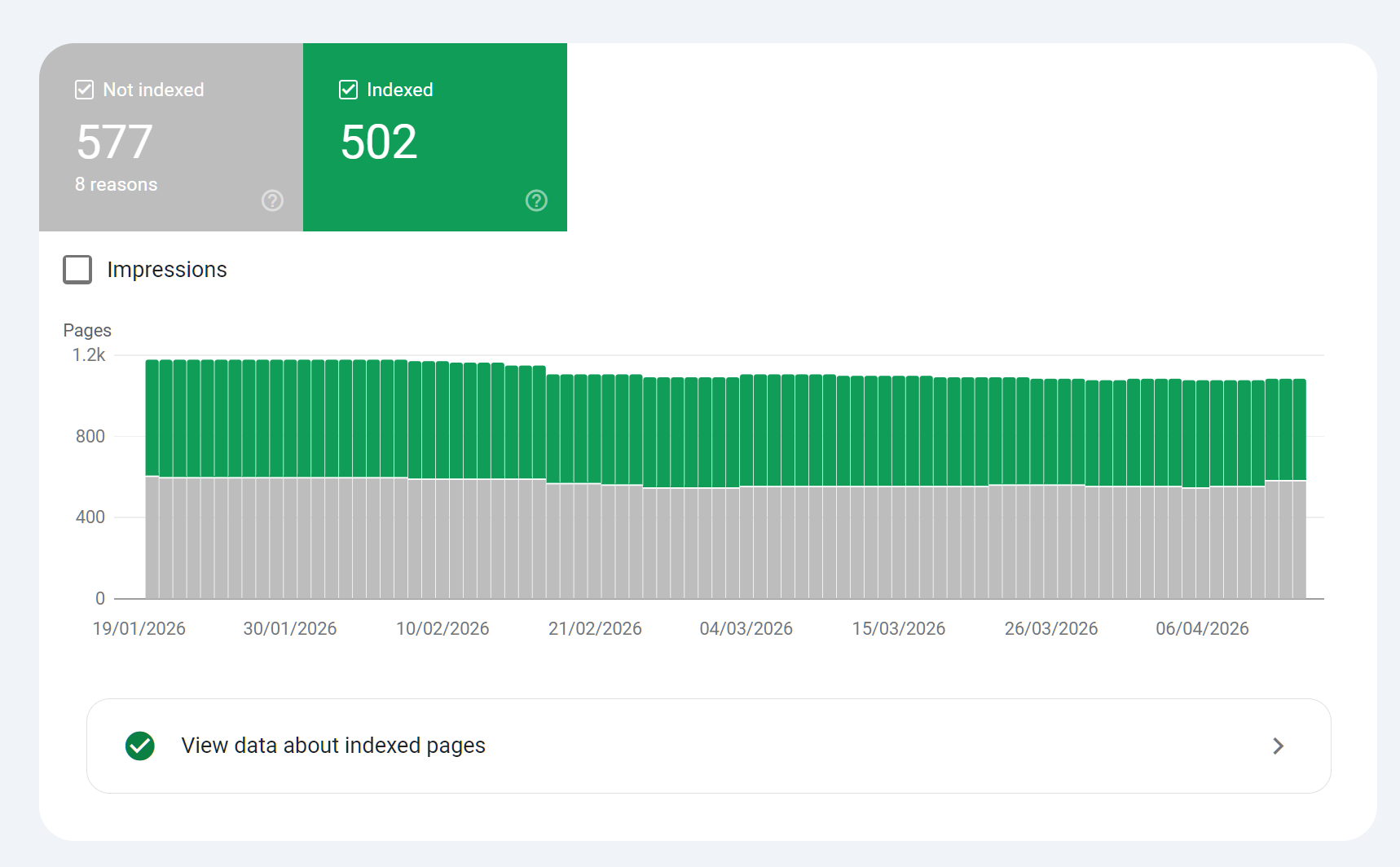

Worth knowing: the 16-month timeframe applies to performance data. Other parts of Google Search Console, such as page indexing, only show the last 3 months.

The 16-month number has been in place for a long time. Realistically, Google has little reason to extend it: it costs them money, and in their view 16 months gives webmasters "sufficient" data to work with.

What Google has actually said about it

This is where it gets thin. Google has never published a clear explanation for why the retention period is 16 months. Not 12, not 18, not 24. You'll see the number mentioned in the help documentation as a fact, but not as a position with reasoning behind it.

The clearest public statement came from John Mueller in May 2023, when people asked whether the new BigQuery bulk export feature could pull historical data. Mueller confirmed on Twitter that there are "no plans" for historical export. BigQuery only captures data going forward from the day you turn it on. Anything already inside the 16 month window stays there, and when it ages out, it's gone from both places.

So we're left to infer from how Google operates more broadly.

The probable reasons

Having built and run an SEO analytics platform myself, I can make a reasonable guess at what's driving this.

Storage and query cost.

Search Console is free. Google serves billions of rows to hundreds of millions of properties globally, and every report in the UI is effectively a live query. Keeping years of per-query, per-page, per-country, per-device data for every verified property would be an enormous infrastructure bill for a product that generates no direct revenue. 16 months is the compromise that gives you year-over-year comparison without committing Google to indefinite retention.

We learned this first-hand when building SEO Stack: the amount of data stored is staggering. There are 16 dimensions across the board, so imagine that for every day, every URL on your site that gets served has query data, and on top of that sit the metrics and breakdowns: impressions, clicks, CTR, position, device, search appearance, country and so on.

So for Google it's not economically viable to uncap this. It's also one of the reasons you can only access the top 1,000 rows for any applied filter: processing that data costs Google money.
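To see why the row count explodes, it helps to treat every dimension as a multiplier. This Python sketch computes an upper bound, assuming one stored row per day per combination of dimension values. The dimension names and counts in the example are hypothetical, not figures from any real site, and real data is far sparser because most combinations never receive an impression.

```python
from math import prod

def max_rows(days: int, distinct_values: dict[str, int]) -> int:
    """Upper bound on stored rows: one row per day for every combination of
    dimension values. Each extra dimension multiplies the total."""
    return days * prod(distinct_values.values())

# Hypothetical site: 100,000 page/query pairs, 20 countries, 3 devices,
# over roughly 16 months (487 days).
print(max_rows(487, {"page_query_pairs": 100_000, "country": 20, "device": 3}))
# prints 2922000000
```

Even if only a small fraction of those combinations actually occur, the point stands: adding a dimension or extending the date range multiplies storage and query work rather than adding to it.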

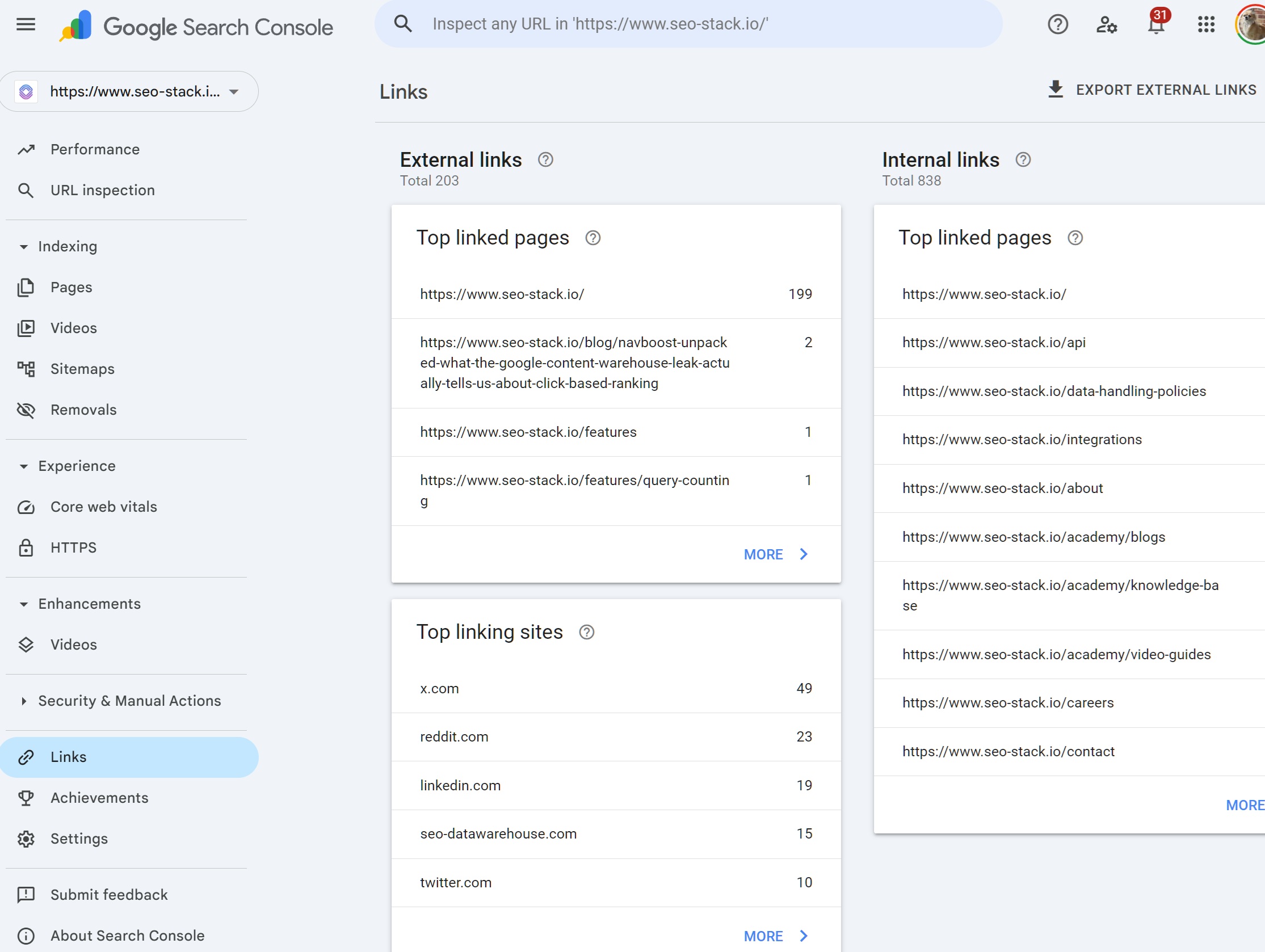

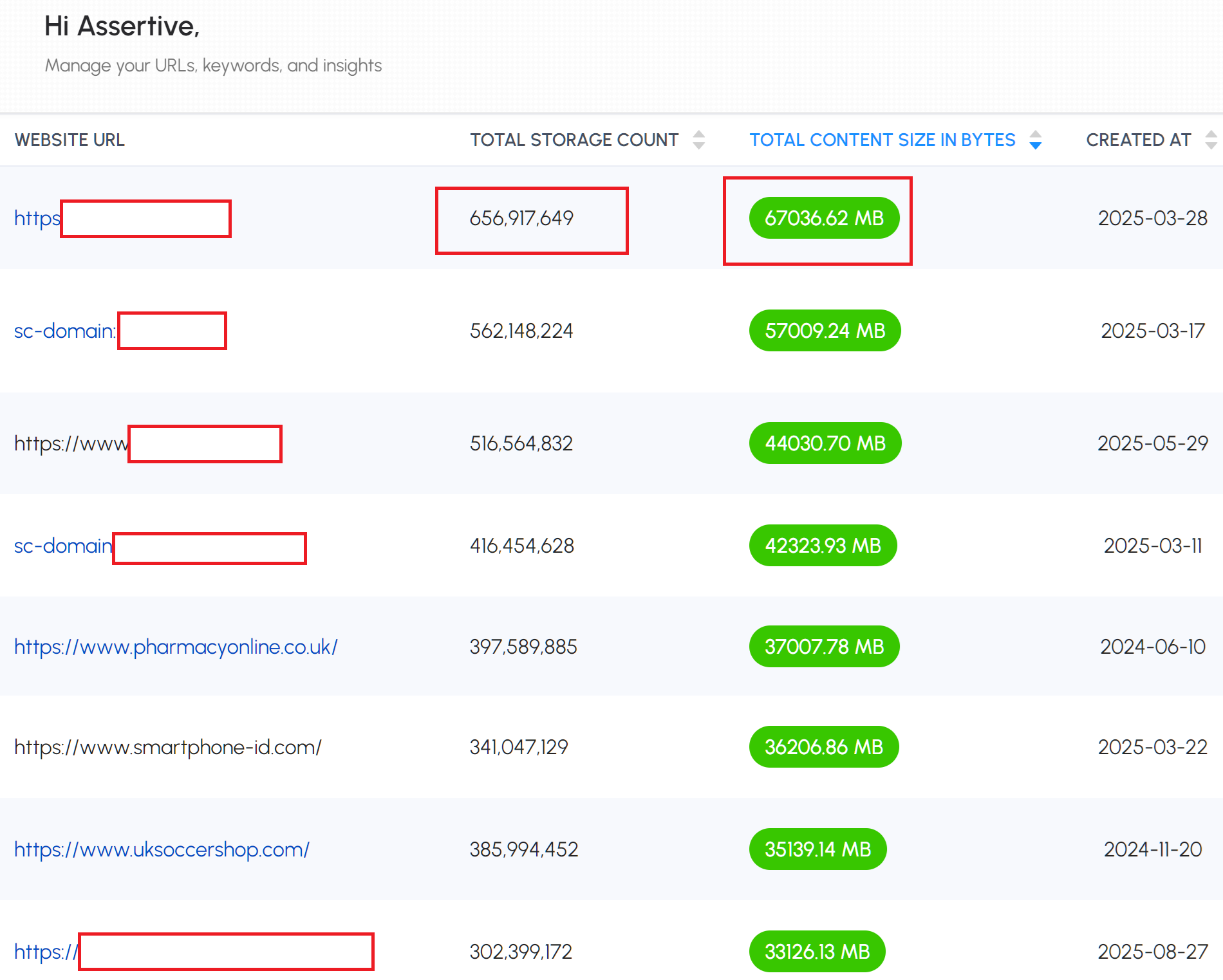

In SEO Stack you can see JUST how big the dataset for a SINGLE website can get! This is EXACTLY the reason why Google Search Console does not go beyond 16 months and 1,000 rows.

Look at this snapshot: one website with warehoused Search Console data in SEO Stack has 656,917,649 rows of data totalling 67 gigabytes. That is one website! When you open this website in SEO Stack, we have to process every single record so that we can offer mass sorting and query filtering across the entire dataset, unlike GSC, which will only give you sampled data.
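A quick back-of-envelope check on those two figures shows what's driving the size. The sketch assumes "67 gigabytes" means decimal gigabytes (10^9 bytes) and also shows the binary (2^30) reading for comparison.

```python
def average_bytes_per_row(total_bytes: float, rows: int) -> float:
    """Average storage per row, given total bytes and row count."""
    return total_bytes / rows

rows = 656_917_649

# Assumption: decimal gigabytes (10**9 bytes).
per_row = average_bytes_per_row(67 * 10**9, rows)
# Alternative reading: binary gibibytes (2**30 bytes), about 7% larger.
per_row_binary = average_bytes_per_row(67 * 2**30, rows)

print(f"{per_row:.1f} bytes per row")         # prints 102.0 bytes per row
print(f"{per_row_binary:.1f} bytes per row")  # prints 109.5 bytes per row
```

Roughly 100 bytes per row suggests the individual rows are small; the size comes almost entirely from the sheer number of them.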

We learned this the hard way, and it's how we came to understand why Google has imposed strict limits on timeframes and query counts.

Speed.

If you've ever filtered a large GSC property by a long date range, you'll have noticed the UI gets sluggish. Extending the window to multiple years without re-architecting the storage layer would make the tool painful to use. Google would rather ship a fast free tool than a slow one with more history.

Keep in mind that Google is STILL only returning the top 1,000 rows and sampling the data. Powerful as Google Search Console is, it's generally slow for the limited amount of data it gives you.

SEO Stack processes over 10,000,000 rows of data a second, far outpacing Google Search Console. By the time GSC has processed 1,000 queries, SEO Stack will have processed over 1 million.

Data minimisation.

Speculative but credible. Regulators in the EU and elsewhere have been pushing hard on retention periods for anything involving query-level data. Aggregated search performance data is less sensitive than individual user logs, but Google's general direction of travel is to retain less, for shorter. 16 months fits that pattern.

The ecosystem.

The polite version: Google provides the raw data window and lets third party tools build longer-term analysis on top of it. The cynical version: if GSC stored five years of clean first-party data for free, the commercial case for Ahrefs, Semrush and the rest gets harder. I don't think ecosystem protection is the primary driver here, but I'd be surprised if it wasn't on the list somewhere.

None of this is confirmed. Google's formal position is essentially "16 months is what you get." Everything else is informed guessing.

Their stance is essentially: we'll give you enough data to work with, and if you want more, use the API or Google BigQuery. But storing data in BigQuery is one thing; getting it out requires an understanding of SQL, or building your own UI (or hooking it into Data Studio, now Looker Studio).
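To give a sense of what "getting it out" involves, here's a Python sketch that builds a monthly roll-up query against the bulk export. It assumes the default `searchconsole` dataset and the `searchdata_site_impression` table with the `data_date`, `clicks`, `impressions` and `sum_top_position` columns described in Google's bulk export documentation; check these against your own export, and treat the project ID as a placeholder. You'd run the resulting SQL in the BigQuery console or with the google-cloud-bigquery client (`bigquery.Client().query(sql).result()`).

```python
def monthly_totals_sql(project: str, dataset: str = "searchconsole") -> str:
    """Build BigQuery SQL that rolls the site-level export table up by month.
    Table and column names assume the standard bulk export schema."""
    table = f"`{project}.{dataset}.searchdata_site_impression`"
    return f"""
SELECT
  DATE_TRUNC(data_date, MONTH) AS month,
  SUM(clicks) AS clicks,
  SUM(impressions) AS impressions,
  SAFE_DIVIDE(SUM(clicks), SUM(impressions)) AS ctr,
  -- sum_top_position is zero-based, hence the + 1
  SAFE_DIVIDE(SUM(sum_top_position), SUM(impressions)) + 1 AS avg_position
FROM {table}
GROUP BY month
ORDER BY month
""".strip()

# "my-gcp-project" is a hypothetical project ID.
print(monthly_totals_sql("my-gcp-project"))
```

Average position has to be derived from the summed top position rather than averaged directly, which is exactly the kind of detail that makes raw export data harder to use than the UI.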

Why BigQuery didn't actually solve the problem for me

When Google announced the bulk export to BigQuery in February 2023, I set it up on my agency's site and on a few client properties the same week. The feature itself is genuinely useful. You get unsampled, full row count data written into your own BigQuery project every day, including the long-tail queries that the UI hides behind its 1,000 row limit. For my own analytics work it's been valuable.

But it does not backfill. Not a single day.

I had a client who wanted a deck showing traffic patterns back to 2020 (yes, there is GA4, but it was missing a huge amount of data). I already knew the historical data wasn't available, and I don't like giving clients third-party data from Ahrefs or Semrush, primarily because of the sheer gaps between first-party data (GSC) and third-party data (Semrush, Ahrefs etc.). The day you turn on the BigQuery export is day zero. Every day after that you accumulate history. Before that, nothing.

We'd started building SEO Stack before Google announced the BigQuery integration for GSC. Before then, you could only pull GSC data over the API, and you had to build your own architecture around it to store and process the data (and I can tell you from experience it was NOT easy). GSC is a lot more complex than it looks; it took rebuilding it ourselves to figure that out.

The practical consequence is that BigQuery only solves the problem in the future tense. If you set up the export today, then in 16 months' time the oldest days will start dropping out of GSC while remaining in BigQuery, and from that point on your history grows beyond the rolling window. After three years of running the export, you'll have three years of history. It works, but it's a slow build, and the catch is that you have to have made the decision long before you actually need the data.
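The arithmetic is simple enough to sketch. This Python function works in whole calendar months and ignores the export's start-up lag, so it's an approximation: the history you can reach is the 16-month GSC window, extended back to the export start date once that date is older than the window.

```python
from datetime import date
from typing import Optional

def months_of_history(today: date, export_start: Optional[date],
                      window_months: int = 16) -> int:
    """Whole months of performance data reachable today: the rolling GSC
    window, or the export's age if the export started before the window."""
    def month_index(d: date) -> int:
        return d.year * 12 + d.month - 1

    if export_start is None:  # no export: only the rolling window
        return window_months
    exported_months = month_index(today) - month_index(export_start)
    return max(window_months, exported_months)

print(months_of_history(date(2024, 1, 1), date(2023, 6, 1)))  # prints 16
print(months_of_history(date(2026, 6, 1), date(2023, 6, 1)))  # prints 36
```

The first call shows why the export feels useless at first: seven months in, GSC still covers everything BigQuery has.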

The lesson I've taken from this, and the one I push on every new client now: turn on the BigQuery export on day one of the engagement, not on the day someone asks for a five-year trend. By the time they ask, you've already lost the months you didn't export. We also encourage clients to use SEO Stack primarily because we offer a much better architecture for data warehousing and processing.

One other thing. BigQuery export has a roughly 48-hour lag before the first data arrives, and it doesn't include every report type. Coverage data, for example, isn't in the export. If you need the technical stuff archived too, you need a separate process for that.

Why Ahrefs and Semrush were not a substitute

The obvious fallback when you can't get historical data from Google is to pull it from a third party tool. Ahrefs and Semrush both show estimated organic traffic going back years. If you need a chart for a pitch deck and you don't care much about precision, it is the most viable answer.

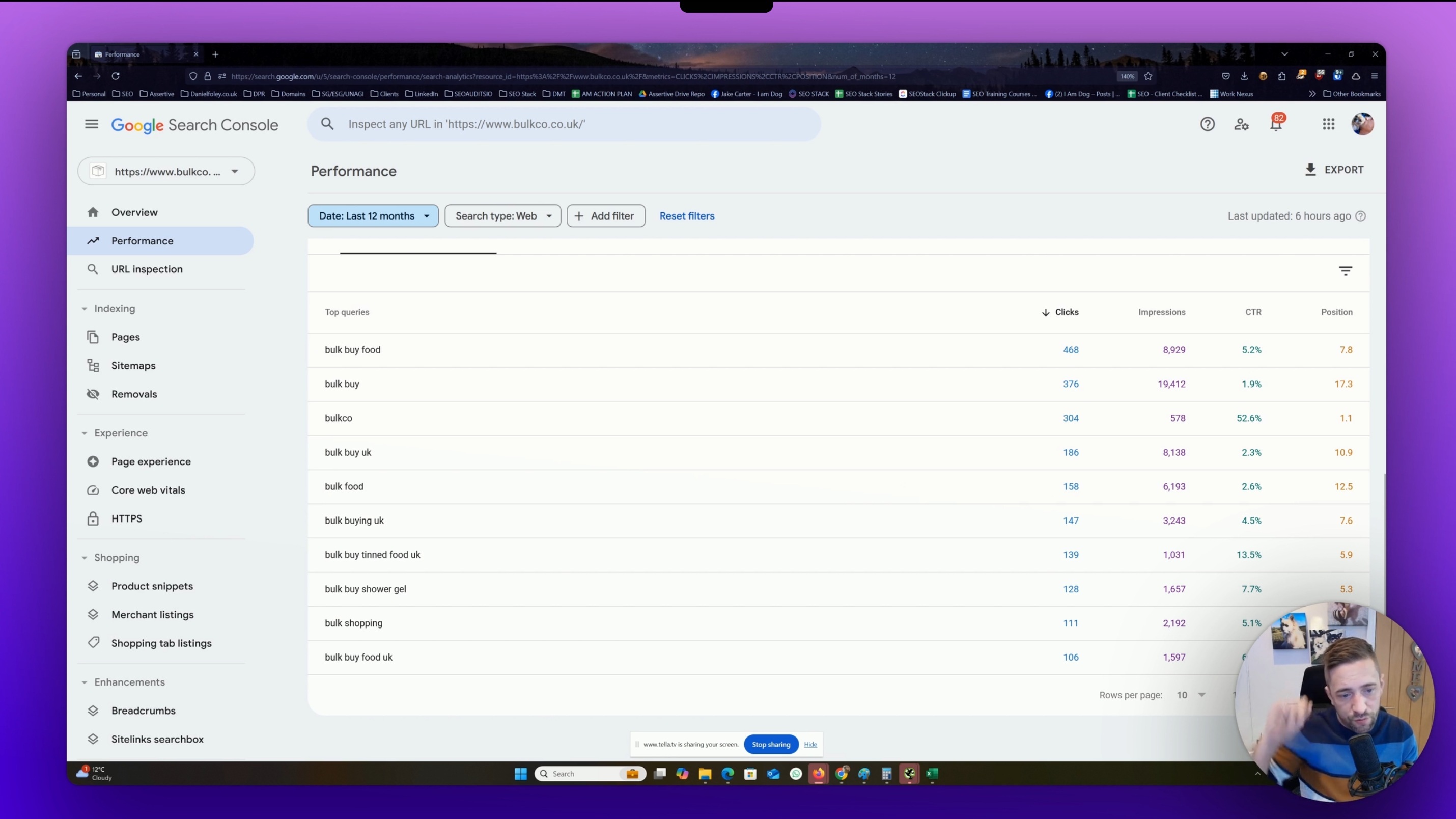

I tried this properly on a project where I had full GSC access and wanted to cross-check the numbers. The results were rough enough that I stopped using them.

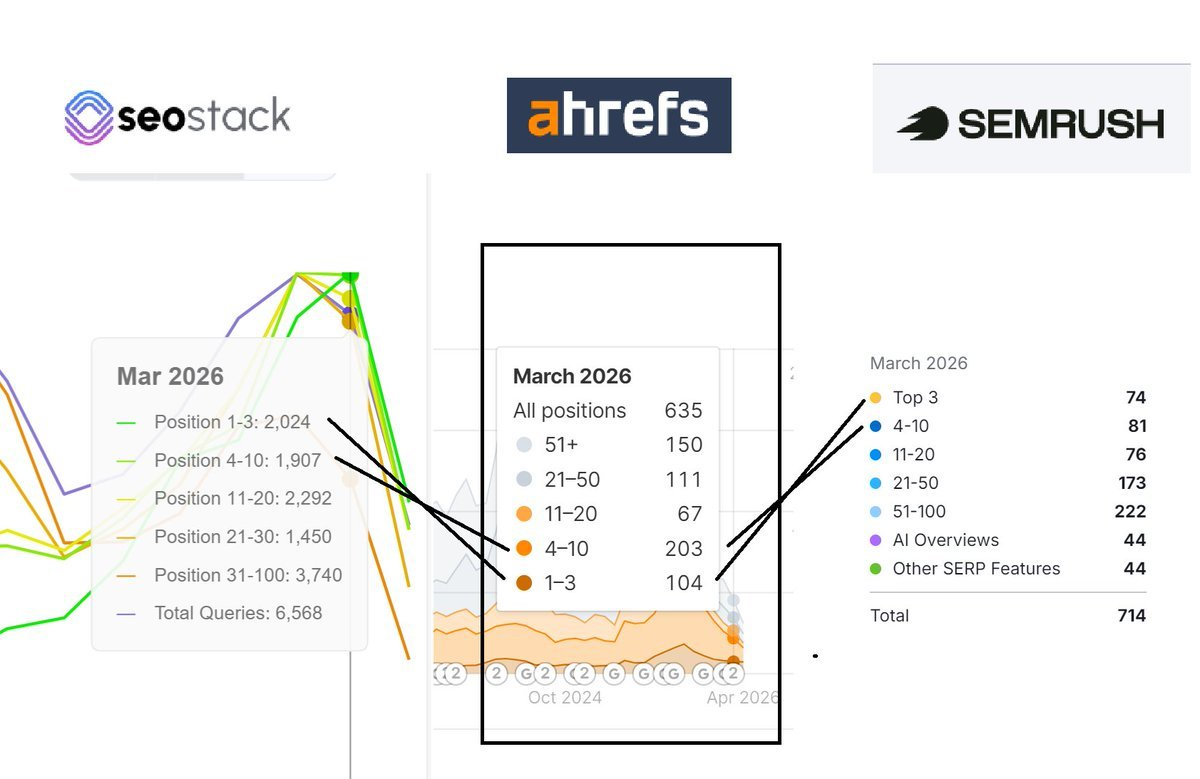

Here I've shown the gap between a client website's query counts in SEO Stack (warehoused GSC data without the row limits) and those reported by Ahrefs and Semrush. The numbers speak for themselves.

For the domains I compared, the Ahrefs and Semrush traffic estimates were off by margins that made them unusable for anything decision-critical. That matches independent research: a study by Collaborator comparing 184 websites put the average error margin across Ahrefs, Semrush and Similarweb at around 50% against GSC actuals, with Ahrefs coming out top at about 48% error. A separate piece from Serpple put Ahrefs' average discrepancy at around 22% in their sample. However you cut it, you're looking at a tool that's directionally useful and numerically unreliable.
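If you want to run the same cross-check on your own sites, one common way to express "error margin" is mean absolute percentage error against the GSC figure. I don't know the exact methodology those studies used, so treat this Python sketch as one reasonable approach rather than a reproduction of their numbers.

```python
def absolute_percentage_error(estimate: float, actual: float) -> float:
    """|estimate - actual| as a percentage of the GSC (actual) figure."""
    if actual == 0:
        raise ValueError("actual clicks must be non-zero")
    return abs(estimate - actual) * 100 / actual

def mean_absolute_percentage_error(pairs: list[tuple[float, float]]) -> float:
    """Average error across sites; each pair is (tool estimate, GSC actual)."""
    errors = [absolute_percentage_error(est, act) for est, act in pairs]
    return sum(errors) / len(errors)

# Illustrative numbers only: one site overestimated by half, one underestimated by half.
print(mean_absolute_percentage_error([(150, 100), (50, 100)]))  # prints 50.0
```

Using absolute rather than signed errors matters here: in the example the totals match exactly (200 estimated vs 200 actual), yet each individual site is out by 50%.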

The reason is structural, and it's worth understanding rather than just dismissing:

Their keyword universe is a sample, not an exhaustive list. They track a defined keyword set, not every query that ever sent a click to your site, and they struggle with the HUGE volume of ZSV (zero search volume) queries.

Their position data is a snapshot, not a continuous record. Keywords you ranked for in a volatile window get missed.

Their search volume figures are estimates themselves, often lagged and sometimes wrong by a wide margin, particularly for smaller markets and long-tail terms.

Click-through rate is modelled, not observed.

Multiply four estimates together and the result has very little to do with what actually happened in Google's logs. For competitor analysis, market sizing, or spotting directional trends, Ahrefs and Semrush are still fine. For filling in missing GSC history on your own site, they're not accurate enough to present to anyone who is going to check the numbers.
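The compounding effect is easy to underestimate. This Python sketch assumes each of the four inputs (keyword coverage, position, search volume, CTR) acts as an independent multiplier with its own relative error; real tools combine them in more complicated ways, so it only illustrates the direction of the effect.

```python
from math import prod

def compounded_error(relative_errors: list[float]) -> float:
    """Relative error of a product of estimates.
    Each entry is that input's error, e.g. 0.2 = 20% too high, -0.1 = 10% too low."""
    return prod(1 + e for e in relative_errors) - 1

# Four inputs, each only 20% too high:
print(round(compounded_error([0.2] * 4), 4))      # prints 1.0736 (about 107% too high)
# Errors of opposite sign partially cancel:
print(round(compounded_error([0.2, -0.2]), 4))    # prints -0.04
```

Four modest errors in the same direction roughly double the final figure, while mixed errors can make an estimate look close for the wrong reasons.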

I use Ahrefs and have used Semrush in the past. They're great for "guidance" when running competitor comparisons, but the reality is that the numbers are way out.

What to do about it

Three things, in order of importance.

Turn on the BigQuery bulk export today for every property that matters. It costs very little in storage terms for a normal site, and it's the only mechanism Google themselves provide for keeping data beyond 16 months. The cost of wishing you'd done this a year ago is a lot higher than the BigQuery bill. Alternatively, use SEO Stack or SEO Gets for your data storage and UI.

Keep a parallel monthly CSV export as a belt-and-braces archive. If BigQuery gets disconnected, the project gets reshuffled, or an owner leaves and the permissions break, you'll still have the monthly snapshots. I've seen BigQuery configurations go sideways enough times that I don't rely on it alone.

Use third party tools for what they're actually good at. Competitor visibility, backlink discovery, keyword universe expansion, rough market sizing. Don't use them as a replacement for your own GSC history, because they aren't one.
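For the monthly CSV archive, bear in mind that the Performance report's own export is capped at 1,000 rows. A minimal Python sketch of a script that pages through the Search Analytics API instead follows, assuming the API's maximum `rowLimit` of 25,000 per request with `startRow` for pagination. `fetch_page` is a hypothetical callable you supply that sends one request body and returns the API's JSON response; the commented wiring assumes google-api-python-client and existing credentials.

```python
import csv
from typing import Callable

ROW_LIMIT = 25_000  # maximum rows per Search Analytics API request
METRICS = ["clicks", "impressions", "ctr", "position"]

def fetch_all_rows(fetch_page: Callable[[dict], dict], start_date: str,
                   end_date: str, dimensions: list[str]) -> list[dict]:
    """Page through one date range, advancing startRow until a short page arrives."""
    rows: list[dict] = []
    start_row = 0
    while True:
        body = {
            "startDate": start_date,  # YYYY-MM-DD
            "endDate": end_date,
            "dimensions": dimensions,
            "rowLimit": ROW_LIMIT,
            "startRow": start_row,
        }
        page = fetch_page(body).get("rows", [])  # "rows" is absent when there's no data
        rows.extend(page)
        if len(page) < ROW_LIMIT:
            return rows
        start_row += ROW_LIMIT

def write_monthly_csv(rows: list[dict], dimensions: list[str], path: str) -> None:
    """Write one month's rows: dimension values from "keys", then the four metrics."""
    with open(path, "w", newline="", encoding="utf-8") as f:
        writer = csv.writer(f)
        writer.writerow(dimensions + METRICS)
        for row in rows:
            writer.writerow(row["keys"] + [row[m] for m in METRICS])

# Hypothetical wiring (not run here):
# service = build("searchconsole", "v1", credentials=creds)
# fetch_page = lambda body: service.searchanalytics().query(siteUrl=SITE_URL, body=body).execute()
# rows = fetch_all_rows(fetch_page, "2025-01-01", "2025-01-31", ["query", "page"])
# write_monthly_csv(rows, ["query", "page"], "gsc-2025-01.csv")
```

Keeping the fetch step injectable means the same archiving logic can be tested without credentials and pointed at any property.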

The 16 month window isn't going to change. Google had the obvious opportunity to extend it when they launched BigQuery export and they chose not to. The sensible assumption is that the limit stays where it is, and the responsibility for longer retention sits with the site owner. That's annoying but it's the working reality, and the earlier you act on it the less history you lose.
