Let me preface this by saying I've been in SEO for over 25 years. I've watched the industry go through every conceivable cycle of hype, panic, rebranding, and eventual normalisation.

From PageRank obsession, to link building witch hunts, to "content is king" (still waiting for the coronation), to whatever RankBrain was supposed to do to us all.

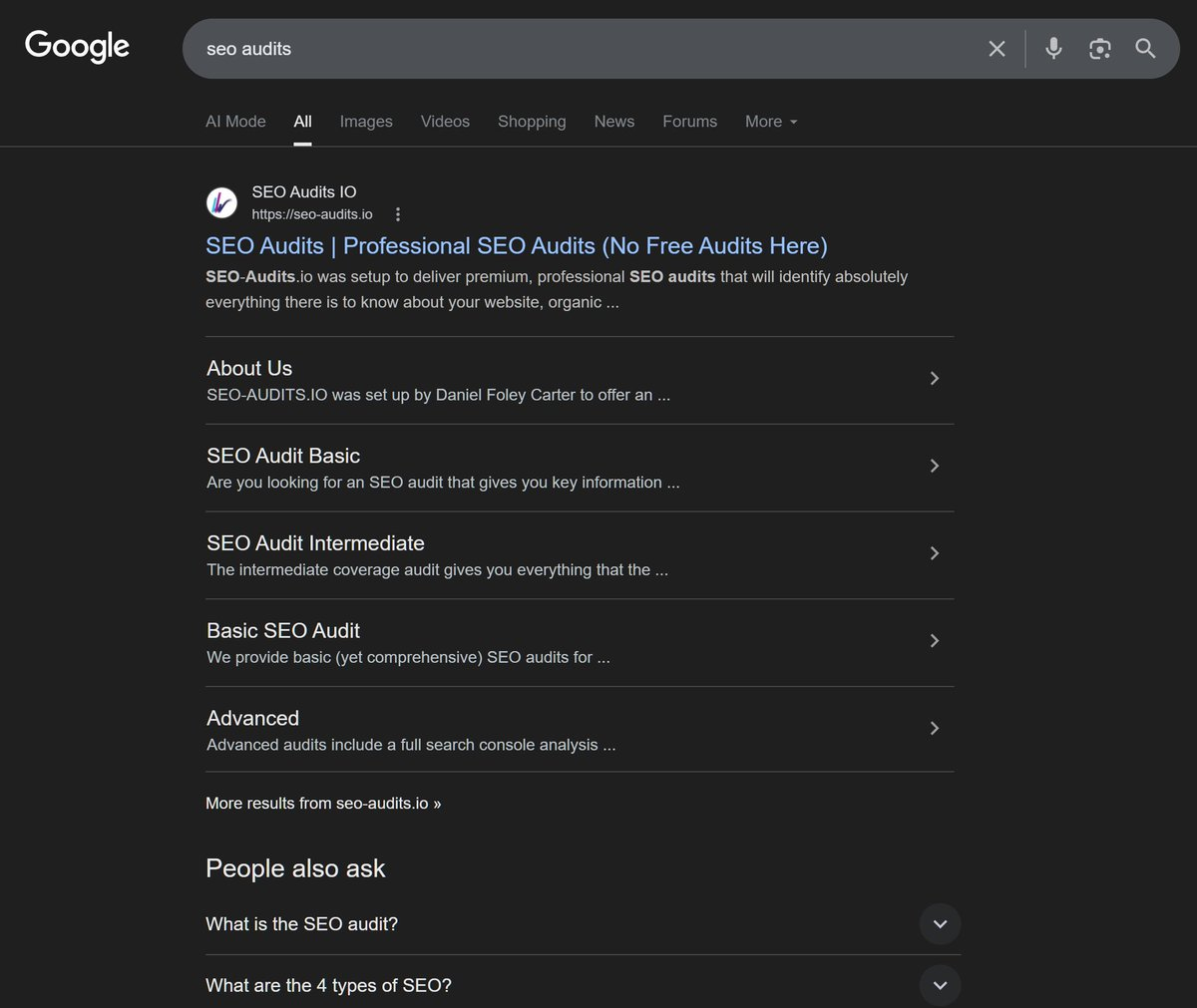

I watched everything from Google's Bigdaddy update through to the DEATH OF EMDs (yeah, right: Google still loves 'em. Check it out: Google "SEO Audits" and you should see my EMD ranking).

Oh, how I do miss Matt Cutts.

Anyway, I digress. Right on schedule, we have our latest identity crisis: Generative Engine Optimisation. Or GEO. Or AEO. Or LLM-SEO. Pick your acronym; there's a consultant somewhere ready to sell you a retainer around it.

I threw this together, what do you think?

So let me actually break down why I think a lot of the noise around GEO is either premature, misleading, or both.

The Platform Problem: You're Not Tracking One Thing, You're Tracking Five, or Six?

Here's a fundamental issue nobody seems to want to address head-on.

Traditional SEO had one dominant platform. Google. Yes, there was Bing. Yes, there was Yahoo back in the day. But realistically, if you ranked well on Google, you were doing SEO. The measurement, the tooling, the strategy: it all converged on one target.

It was VERY SIMPLE: you either PAID to advertise on Google, you ranked on Google, or you did both.

GEO doesn't have that luxury.

ChatGPT currently holds around 68% of the AI chatbot market share, but that's down dramatically from 86.7% just twelve months ago (source: Vertu).

Over the same period, Google Gemini surged from 5.7% to 21.5% (source: ALM Corp).

Meanwhile you've got Perplexity doing its own thing, Grok pulling from X's firehose of real-time social data, and Claude operating on entirely different training and retrieval logic. Each of these platforms has its own pre-training data, its own RAG configuration, its own citation behaviour, its own way of deciding what gets surfaced and what doesn't.

Don't forget also that:

1. LLMs come in different models that are forever changing. One minute we have ChatGPT 4o, then 5.2, then 5.3, or perhaps you are using Sonnet or Opus (or, soon, Mythos).

2. Personalisation / memory: this makes a big difference.

3. Location.

4. Generation of content is NOT linear or consistent; it changes from one run to the next.

And to boot: research shows 89% of citations differ depending on whether you ask ChatGPT or Perplexity (source: Exposure Ninja).

So when a GEO agency tells you they're going to "optimise your brand's AI visibility", ask them which AI, because the answer matters enormously and there's no unified playbook.

Perhaps you could ask them:

Outside of SEO, what GEO tasks will you be doing?

How will you measure performance?

How do you contend with wildly varying "visibility" scores across different tracking platforms?

Remember, you're not tracking one search engine. GEOs are trying to optimise for five different black boxes with five different algorithms, five different data sources, and five different personalities (and that's assuming they focus on the major players and aren't looking further afield, e.g. DeepSeek).

The Query Length Problem: Welcome to the Wild West of Prompt Tracking

In traditional SEO, rank trackers work beautifully for shorter, defined keyword combinations. "SEO consultant London." "Buy running shoes UK." Clean, repeatable, trackable. You pop them into Semrush, Ahrefs, AWR, or SE Ranking, check your position each week, report to your client, everyone's happy.

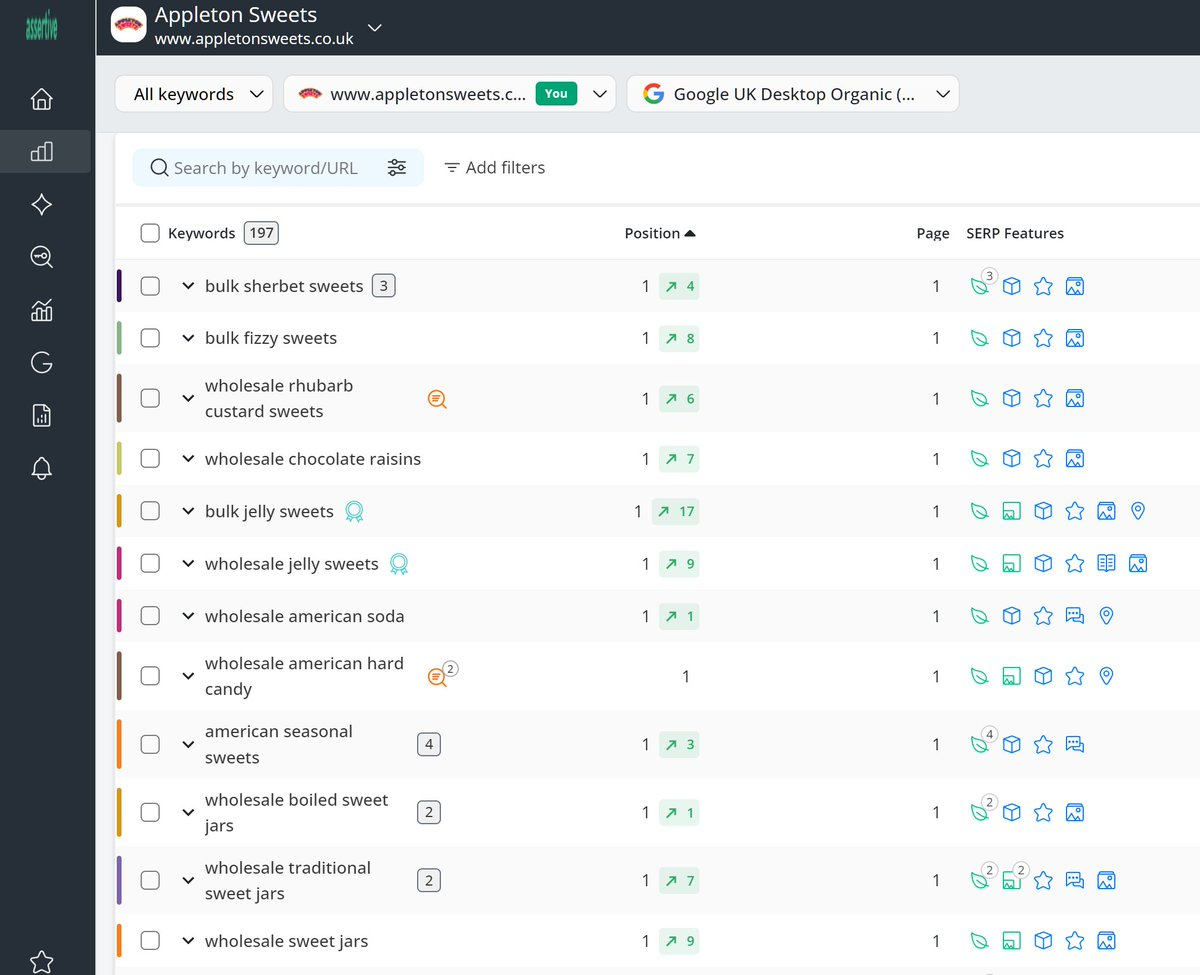

We used AWR back in the day (fantastic platform!), but we've moved all our tracking to www.seo-stack.io because it's our own and because it can track everything in the top 100 results of the big G.

AI users don't search like that. Someone using ChatGPT or Perplexity might type: "What are the best SEO consultants in the UK who specialise in enterprise technical SEO and have experience with data warehousing platforms?"

Now tell me: how do you track that?

What prompt do you put in your GEO tracker?

Because as the query gets longer, the probability of that exact query being entered again by someone else plummets.

Even in traditional Google search, about 15% of daily searches are queries that have never been seen before (source: Kartikahuja), and that's with queries averaging just 3–4 words. Multiply that word count by five or ten in a conversational AI prompt, and the combinatorial explosion of unique queries becomes effectively immeasurable at any meaningful scale.
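To put rough numbers on that explosion, here is a back-of-the-envelope sketch in Python. The 10,000-word vocabulary and the 4-word vs 40-word lengths are my own illustrative assumptions, and the result is only an upper bound, since most random word sequences are nonsense nobody would ever type. The point is the scale, not the exact figure.

```python
def possible_queries(vocab_size: int, length: int) -> int:
    """Upper bound on distinct word sequences of a given length."""
    return vocab_size ** length


def order_of_magnitude(n: int) -> int:
    """Power of ten of a positive integer, e.g. 1000 -> 3."""
    return len(str(n)) - 1


VOCAB = 10_000  # assumed working vocabulary, purely illustrative

keyword_space = possible_queries(VOCAB, 4)   # typical short keyword query
prompt_space = possible_queries(VOCAB, 40)   # long conversational prompt

print(f"4-word queries:  about 10^{order_of_magnitude(keyword_space)}")
print(f"40-word prompts: about 10^{order_of_magnitude(prompt_space)}")
```

Under these assumptions the short-query space is around 10^16 and the long-prompt space around 10^160. Even if only a microscopic fraction of those sequences are sensible prompts, a tracker sampling a few hundred of them is barely scratching the surface.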

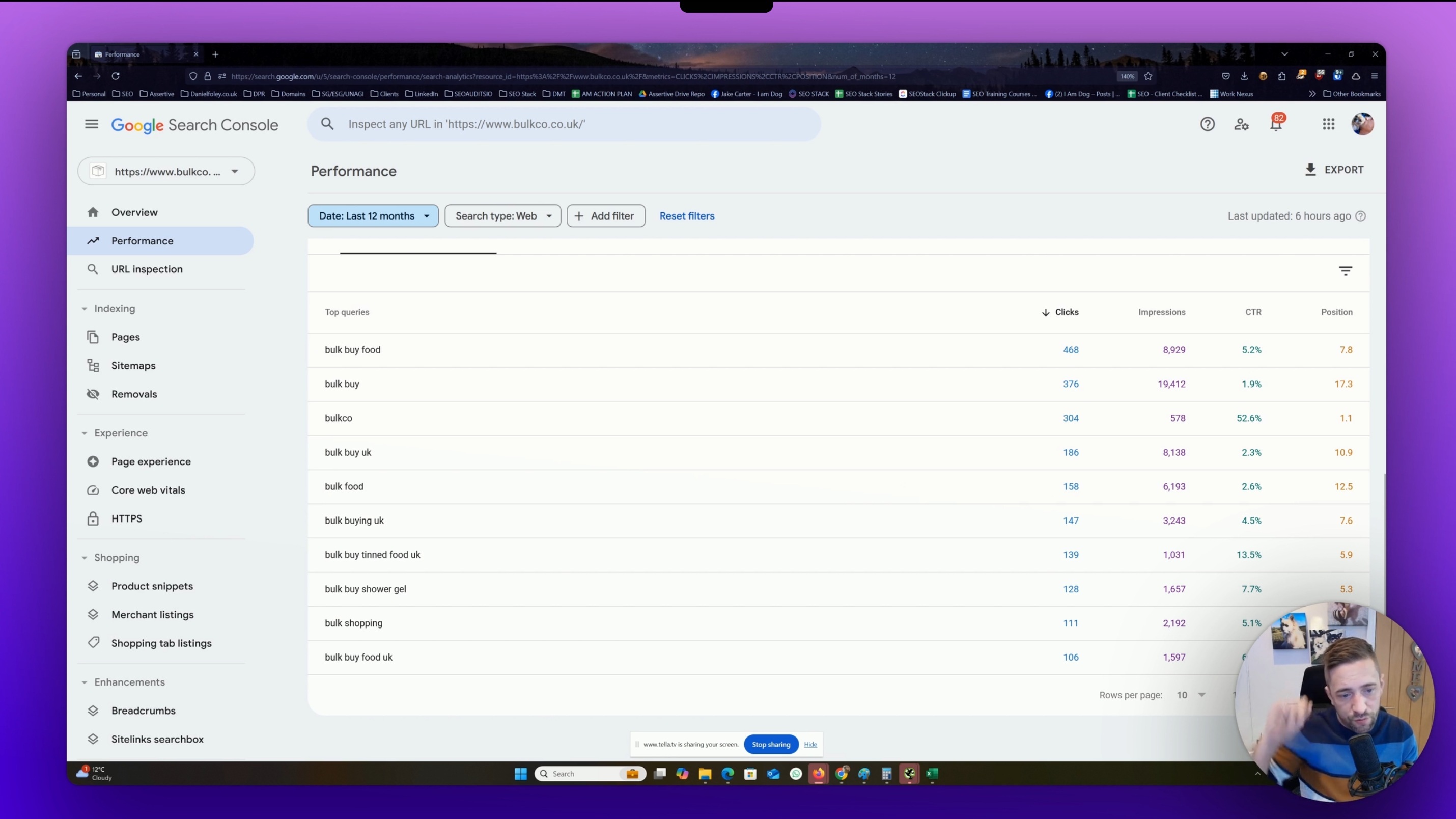

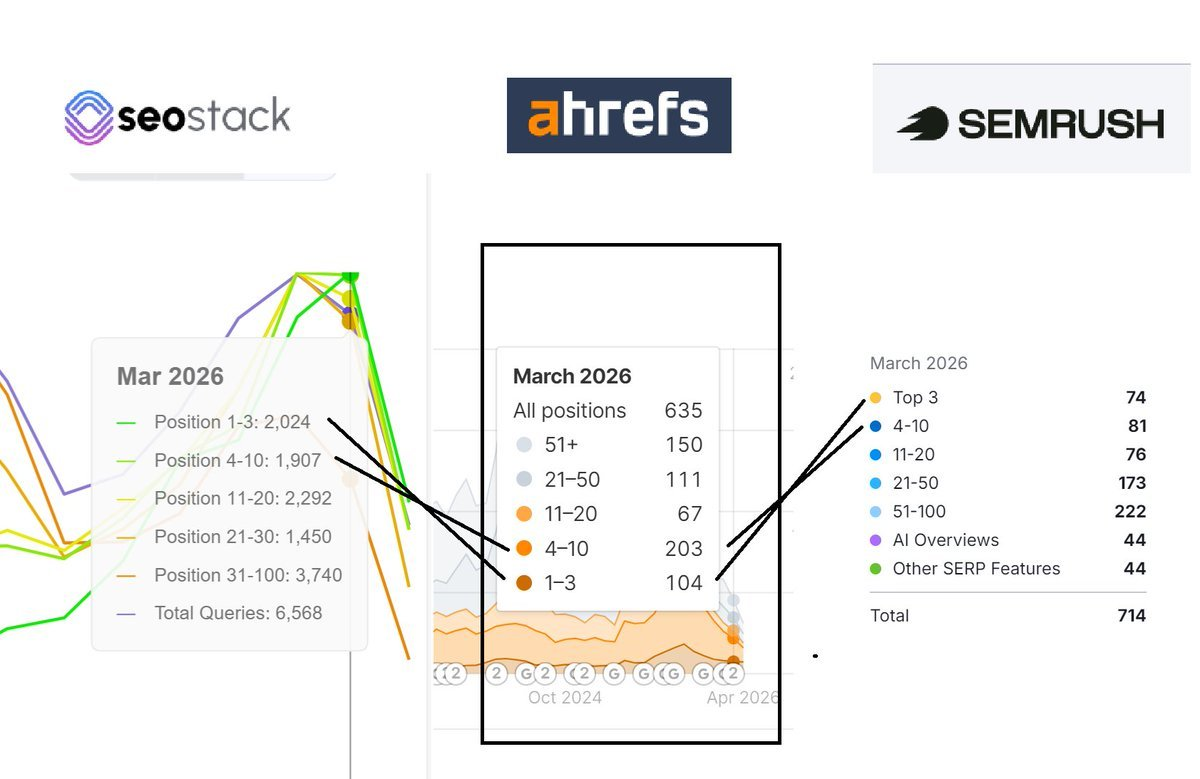

Also, consider the SHEER volume of ZSV (zero search volume) queries. Even some of the world's LARGEST SEO TOOLS, such as AHREFS and SEMRUSH, only capture a small portion of the data (as shown here comparing SEO Stack, AHREFS and SEMRUSH keyword counts for my own website).

Look at the SHEER size of the gap in the data!

AHREFS and SEMRUSH are, by far, the BIGGEST holders of SEO data in the world (aside from Search Console). But if these tools are missing out on 70-80% of searched queries, imagine what it's going to be like trying to track AI PROMPT / CITATION performance.

You could add 1,000 queries to a tracker and a large chunk of them would likely never get searched. You could base your ENTIRE GEO spend on tracking performance for queries people aren't searching.

And let's not forget, WHERE ARE GEO tools getting their prompt search volume data from?

Google gives us Google Search Console.

ChatGPT, Gemini, Grok, Perplexity, Claude: none of them have an equivalent Search Console-style interface with keyword data as yet.

Prompt tracking tools are attempting to sample from an infinite universe.

They're pulling a handful of queries, checking whether your brand is mentioned, and calling that "visibility." It's methodologically interesting at best. At worst, it's giving clients a number that means very little while charging them real money to monitor it.

The Uniqueness Problem: Most AI Queries Are One-Off Events

This follows from the point above, but it deserves its own section because it's genuinely significant and I don't see it discussed enough.

The longer a search query gets, the more unique it becomes. That's not an opinion; that's basic probability. If I'm tracking "SEO consultant," there are hundreds of people searching that every month, and at least I can see that data. That's a market.

If I'm tracking "what SEO consultant should I hire if I'm running a B2B SaaS platform targeting mid-market US companies and I've already tried content marketing," that query might be entered by three people this year, two of whom are doing research for a blog post about GEO.

This matters enormously for any kind of performance measurement framework. In SEO, we built measurement around the fact that keyword volumes are real and repeatable.

The GEO equivalent doesn't exist in the same way. Even if your brand is cited beautifully in response to a 40-word AI prompt today, the chance of the same prompt being entered tomorrow, let alone by a potential customer, is vanishingly small. The signal-to-noise ratio in prompt-based tracking is going to be a genuine problem for anyone trying to run serious performance reporting.

The Analytics Black Hole: No Data, No Proof, No Problem (Apparently)

Here's a fun one. In SEO, we have Google Search Console. Imperfect, yes, but it gives us impressions, clicks, average position, CTR. We have GA4. We have Ahrefs and Semrush for competitive intel. We can actually demonstrate what's happening.

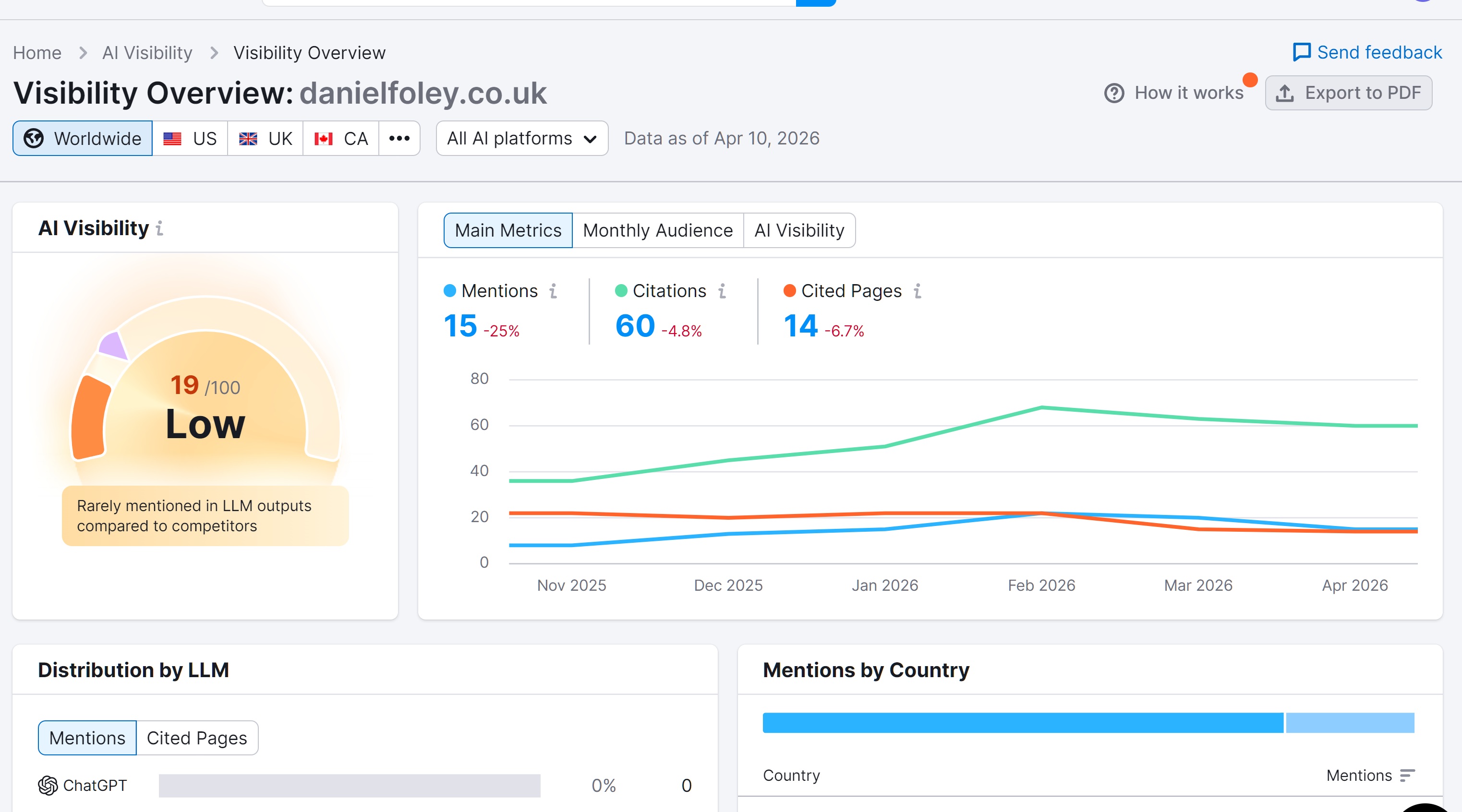

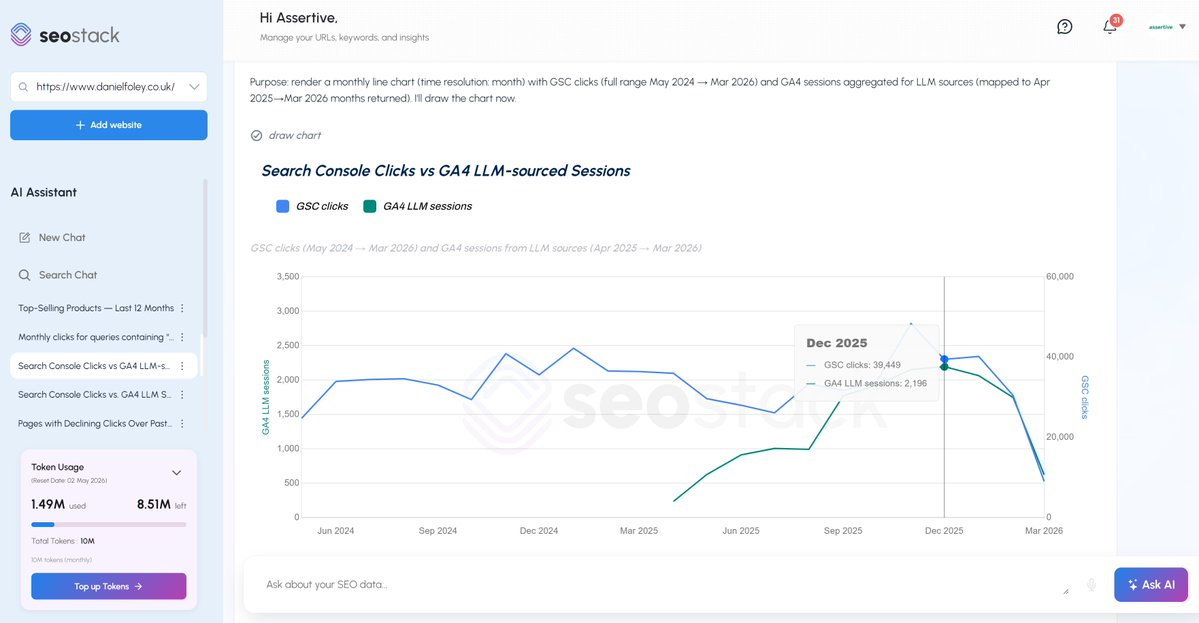

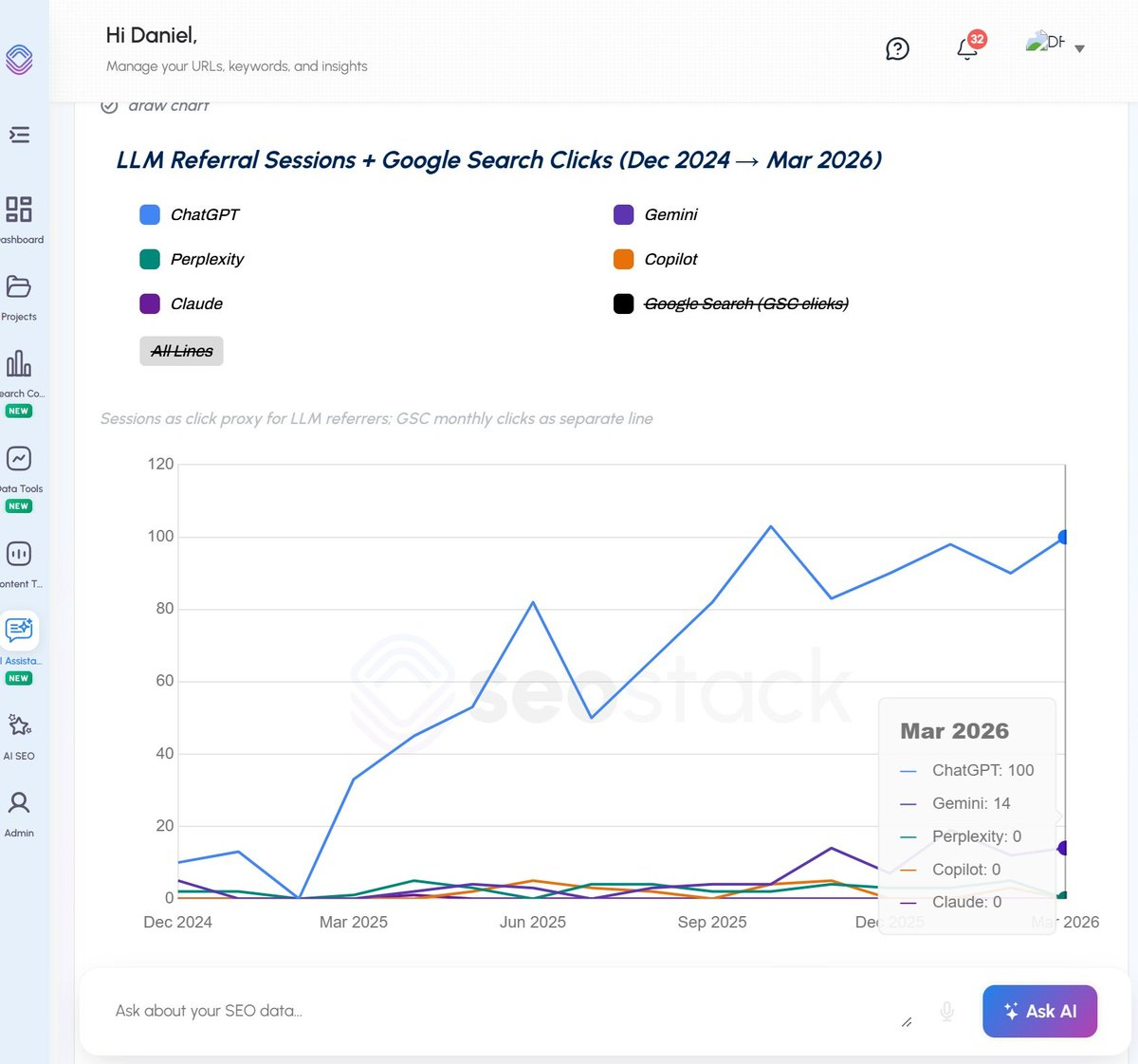

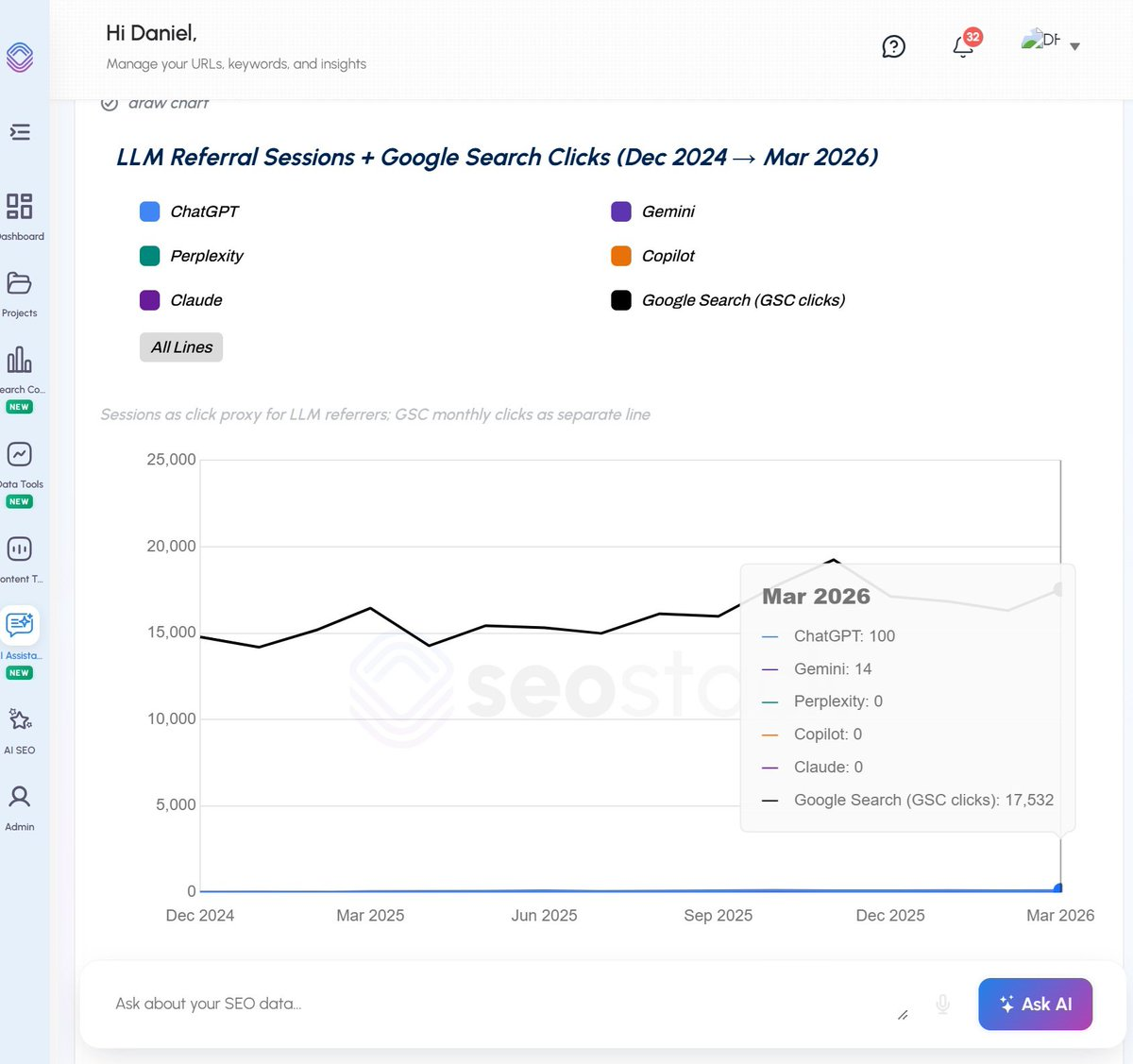

And we also have SEO Stack, which blends GSC + GA4 data, where we can segment out LLM traffic (by referring source or aggregated).

AI platforms give you precisely nothing.

AI referral traffic currently accounts for just 1% of global web traffic (source: Exposure Ninja), and even that sliver is hard to attribute cleanly because most AI platforms don't pass referral data in any consistent way.
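For what it's worth, the bit you can do yourself is bucket whatever referrers do come through. Below is a minimal Python sketch of that idea. The hostname list and the function names are my own assumptions for illustration, not an official list and not how any particular tool does it, so check them against the referrers you actually see in your analytics export.

```python
from collections import Counter
from urllib.parse import urlparse

# Assumed hostname -> platform mapping; illustrative only, verify against your own data.
LLM_REFERRERS = {
    "chatgpt.com": "ChatGPT",
    "chat.openai.com": "ChatGPT",
    "perplexity.ai": "Perplexity",
    "gemini.google.com": "Gemini",
    "claude.ai": "Claude",
    "grok.com": "Grok",
}


def classify_referrer(referrer: str) -> str:
    """Map a full referrer URL to an LLM platform, 'other', or 'direct/unknown'."""
    if not referrer:
        return "direct/unknown"
    host = (urlparse(referrer).hostname or "").lower()
    for domain, platform in LLM_REFERRERS.items():
        # Match the domain itself or any subdomain of it (e.g. www.perplexity.ai).
        if host == domain or host.endswith("." + domain):
            return platform
    return "other"


def summarise(referrers: list) -> Counter:
    """Count sessions per bucket for a list of referrer URLs."""
    return Counter(classify_referrer(r) for r in referrers)


sample = [
    "https://chatgpt.com/",
    "https://www.perplexity.ai/search?q=seo+audits",
    "https://www.google.com/",
    "",
]
print(summarise(sample))
```

Anything that arrives with no referrer lands in "direct/unknown", which is exactly the attribution gap described above: this only counts the visits the platforms choose to label.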

OpenAI doesn't give you a dashboard.

Perplexity doesn't have a Search Console equivalent.

Claude doesn't send you a monthly performance report.

You're left trying to infer everything from third-party tools that are sampling prompts and making educated guesses about citation frequency.

So when a GEO agency promises to "improve your AI visibility", what exactly are they measuring?

How are they proving ROI? If the answer is "well, we ran 500 prompts across four platforms and you were mentioned 47 times," you should be asking what that means in terms of actual traffic, leads, and revenue.

Because right now, that connection is tenuous at best.
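Even taken at face value, a number like "47 mentions out of 500 prompts" carries a margin of error. Here is a hedged Python sketch using a Wilson score interval, which is a standard way to put a range around a proportion. It assumes the 500 prompts are an independent random sample of what real users type, which, as argued above, they almost certainly are not, so treat the result as a best case.

```python
import math


def wilson_interval(hits: int, trials: int, z: float = 1.96):
    """Wilson score interval for a proportion (z = 1.96 is roughly 95%)."""
    if trials <= 0:
        raise ValueError("trials must be positive")
    p = hits / trials
    denom = 1 + z**2 / trials
    centre = (p + z**2 / (2 * trials)) / denom
    half = z * math.sqrt(p * (1 - p) / trials + z**2 / (4 * trials**2)) / denom
    return centre - half, centre + half


low, high = wilson_interval(47, 500)
print(f"mention rate {47 / 500:.1%}, plausible range {low:.1%} to {high:.1%}")
```

For 47 out of 500 the range comes out at roughly 7% to 12%, and it gets wider once you split those 500 prompts across four platforms. None of which tells you anything about traffic, leads, or revenue.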

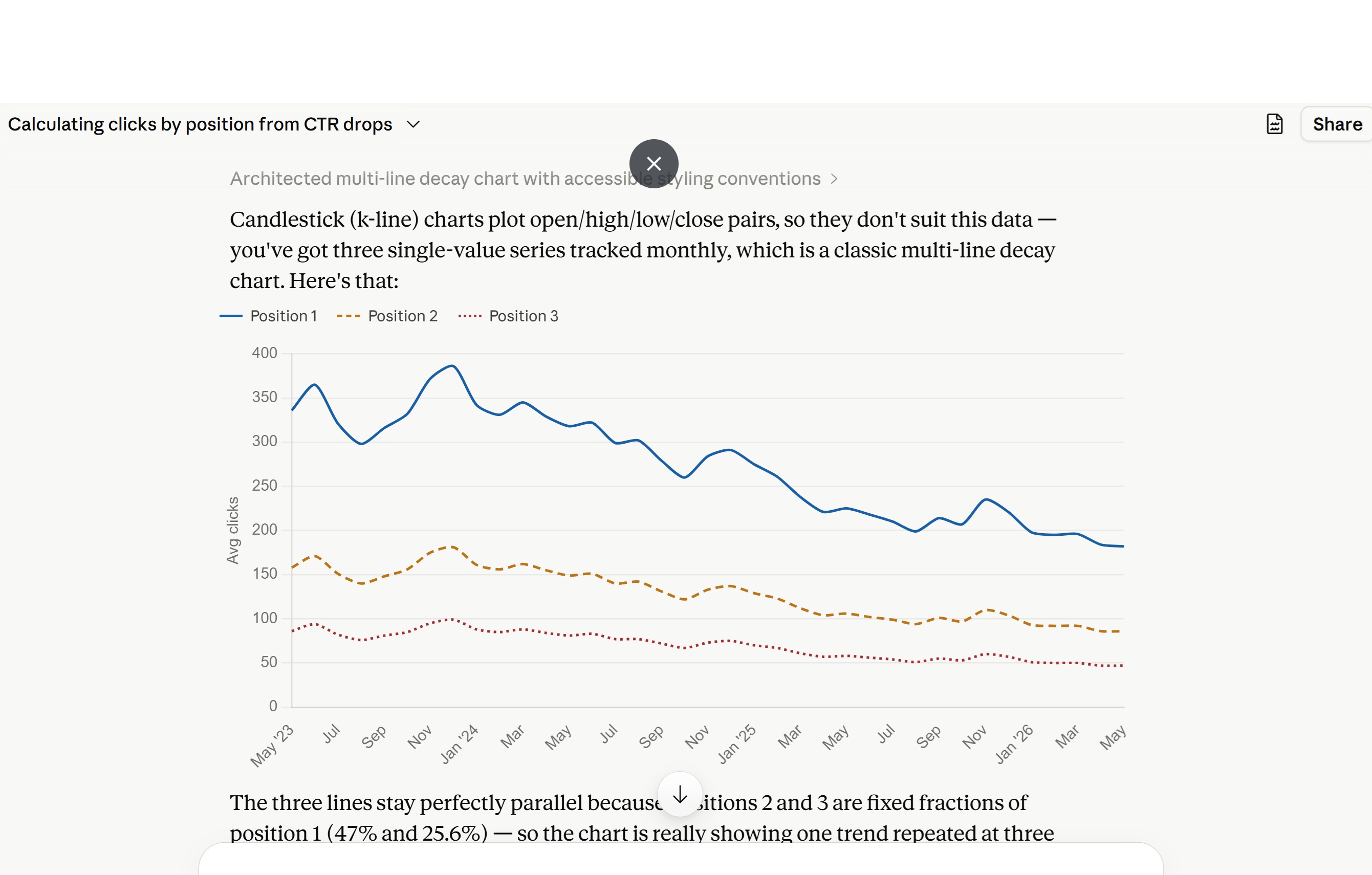

AND look at this. This isn't a joke, this is real data!

Here is an online media publication's LLM traffic from GA4:

Then, when I enabled GSC Clicks to be included:

It SHOULD put into perspective HOW SMALL that market share really is.

The Consistency Problem: LLMs Are Not Search Engines

This is probably my biggest technical gripe with the entire GEO framework as it's currently being sold.

LLMS ARE INCONSISTENT!

Search engines are deterministic enough that we can build robust tracking around them. Google's algorithm changes, yes, but within a given window, if you search "SEO consultant" you'll generally see the same top ten results. That consistency is what makes rank tracking meaningful.

LLMs are not built that way. They're probabilistic by design. Add to that the layers of complexity around RAG (retrieval-augmented generation), query fan-out, personalisation, conversation memory, geographic localisation, and the fact that different versions and configurations of the same model can produce materially different answers, and you've got a measurement problem that's orders of magnitude harder than anything SEO has faced before.

Ask ChatGPT the same question twice and you may get a different answer with different citations.

Add memory into the mix and the response shifts based on what you've asked previously.

Add location, and a user in Manchester may get a completely different set of cited sources than someone in San Francisco asking the same question.

This isn't a minor footnote; it fundamentally undermines the premise of scalable, reliable GEO tracking.
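If you want to see the inconsistency for yourself, one rough approach is to run the same prompt several times, note which domains get cited each time, and score how much the runs overlap. The Python sketch below uses mean pairwise Jaccard similarity for that. The metric choice, the function names, and the example domains are all my own assumptions for illustration; collecting the citations from each platform is left out entirely.

```python
from itertools import combinations
from typing import List, Set


def jaccard(a: Set[str], b: Set[str]) -> float:
    """Shared items divided by total distinct items across two sets."""
    if not a and not b:
        return 1.0  # two empty answers are treated as identical
    return len(a & b) / len(a | b)


def mean_pairwise_jaccard(runs: List[Set[str]]) -> float:
    """Average overlap across every pair of runs: 1.0 = identical, 0.0 = no overlap."""
    pairs = list(combinations(runs, 2))
    if not pairs:
        raise ValueError("need at least two runs to compare")
    return sum(jaccard(a, b) for a, b in pairs) / len(pairs)


# Made-up cited domains from three runs of the same prompt.
runs = [
    {"a.example", "b.example", "c.example"},
    {"a.example", "b.example", "d.example"},
    {"a.example", "e.example", "f.example"},
]
print(f"citation stability: {mean_pairwise_jaccard(runs):.2f}")
```

A score near 1.0 would mean a single check tells you something. A low score means any one-off "visibility" reading is mostly noise, and you would need to repeat the exercise per platform, per location, and with memory on and off.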

Is There Anything Actually Unique About GEO?

I want to be fair here, because I do think there are some genuinely distinct elements to how you optimise for LLM visibility versus traditional search. They're just not as dramatic or as strategically new as the GEO consultants would have you believe.

A few things that are somewhat distinct:

Citation sourcing is different.

Research from Ahrefs (see https://ahrefs.com/blog/ai-search-overlap/) shows that 80% of LLM citations don't even rank in Google's top 100 for the original query, and 28.3% of ChatGPT's most cited pages have zero organic visibility.

That's a real finding and it suggests LLMs are pulling from a broader, different content pool than traditional rankings. Understanding what that pool looks like and how to get into it is a legitimate GEO-specific concern.

Structured, factual, authoritative content matters more.

LLMs tend to synthesise and cite content that is unambiguous, clearly structured, and factually verifiable.

This nudges content strategy away from long-form persuasive copy and toward definitional, reference-style content, which is a real tactical shift.

But whatever you do, don't be fooled by complex wording around this, such as "semantic content chunking". Honestly, just don't.

Brand entity strength plays differently.

In SEO, brand signals help rankings indirectly. In LLMs, brand entity recognition in training data can directly influence whether a model knows you exist at all. Getting your brand consistently referenced across authoritative sources (Wikipedia, major publications, G2, industry databases) matters in a distinct way.

But none of this is so alien from core SEO that it requires an entirely new discipline. John Mueller has said as much. It's an evolution of what we already do, not a revolution.

Why SEO Still Wins And Will For Some Time Yet

Let me close with the numbers, because the numbers are telling a clear story that a lot of the GEO hype conveniently ignores.

Google processes around 13.7 billion searches per day (source: Visual Capitalist).

ChatGPT, despite logging an impressive 1 billion daily searches, still lags far behind.

Google still dominates with nearly 80% of all digital queries globally, while ChatGPT commands 17%.

Even with the fastest adoption curve of any consumer technology in history, AI search is still a fraction of traditional search. And critically, when you isolate transactional searches, the ones that actually drive commercial value for most businesses, Google is considerably less vulnerable. People still go to Google to buy things, find local services, and conduct commercial research. That behaviour is deeply ingrained and not going anywhere quickly.

Even with fewer searches per US user year-on-year, traditional search still makes up about 10% of all US desktop activity, a share that stayed nearly flat throughout 2025.

Yes, AI adoption is growing. Yes, Gartner has predicted a 25% decline in traditional search volume by 2026. But people have been predicting the death of Google for years, and it has a habit of surviving its own obituaries. And let's not forget: Google's AI Overviews, Gemini integration, and AI Mode mean that Google itself is one of the largest AI search surfaces in existence. Doing good SEO and appearing in Google's AI answers are often the same thing.

76.1% of URLs cited in Google AI Overviews also rank in the top 10 of Google's search results. So do good SEO, and you're likely doing good GEO for Google's AI at the same time, no extra retainer required.

The Verdict

GEO is real in the sense that AI search behaviour requires some adaptation of content strategy. It's not real in the sense that it's a cleanly definable, separately measurable, entirely distinct discipline from SEO.

The biggest problem with how GEO is being sold right now is that the measurement infrastructure doesn't exist to validate the investment. No analytics access, wildly inconsistent platform behaviour, query uniqueness that makes sampling almost meaningless, and a fragmented platform landscape that makes any unified "AI visibility" metric largely fictitious.

I've been around long enough to know what a gold rush looks like. When the tooling catches up, when the platforms offer actual analytics, when there's a consistent and validated methodology for measurement, then we can have a proper conversation about GEO as a distinct practice.

Until then, make sure you've got the basics of SEO nailed down. Because the 13.7 billion searches Google handles every day aren't going anywhere just yet.
